Everything You Need to Know About Model Hallucinations

Have you ever asked an AI model a simple question—only to get an answer that sounds convincing but turns out to be completely false?

If so, you’ve encountered what’s known as a model hallucination—one of the most common (and potentially dangerous) challenges in working with large language models (LLMs). As these models become more deeply integrated into customer-facing tools, internal workflows, and high-stakes decision-making processes, the risk of hallucinated outputs becomes more than just a curiosity—it becomes a business liability.

In this article, we’ll unpack what model hallucinations really are, why they happen, and—most importantly—how you can detect and reduce them. Whether you're a developer, product owner, or business leader, understanding this phenomenon is key to using LLMs safely and effectively.

Let’s get into it.

What’s in an LLM Hallucination?

No, your language model didn’t wander off with Alice into Wonderland—though it might confidently claim that it did. A model hallucination refers to an LLM’s tendency to generate content that sounds plausible but is entirely fabricated. These can include made-up facts, imaginary citations, or confidently stated misinformation that has no grounding in reality.

Why does this happen? At their core, LLMs aren’t built to understand the world—they’re built to predict the next word in a sentence based on patterns learned from massive datasets.

They’re incredibly good at this, which can create the illusion of intelligence or factual understanding. But their objective isn’t truth; it’s coherence and fluency. That means sometimes, especially when data is sparse or the prompt is ambiguous, they just… make things up.

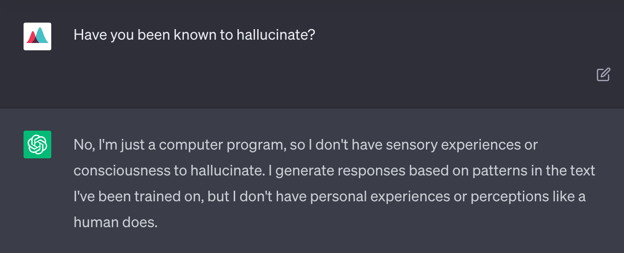

Curious, I asked ChatGPT whether it ever hallucinates. Here's what it said:

As you might expect, it confidently denied the claim. Good news: AGI hasn’t arrived just yet.

But while hallucinations may be amusing in casual use, they’re a serious liability in business-critical applications. Picture an LLM that invents a customer’s bank balance, miscalculates tax filings, or suggests the wrong medical diagnosis. These aren’t harmless errors—they’re the kinds of mistakes that can erode trust, compromise privacy, or even cause real-world harm.

That’s why hallucinations remain one of the most significant challenges facing LLM adoption in high-stakes environments. When accuracy matters, a model that sounds right but isn’t can do more damage than one that simply admits, “I don’t know.”

Detecting and Mitigating AI Hallucinations

Detecting hallucinations can be tricky. Many LLM APIs return the generated text without any confidence score attached, so an answer the model has little basis for can read just as assured as one it has strong evidence for. There is a growing body of research on detecting model hallucinations.

However, this is still a nascent area, and the proposed methods remain highly experimental. Unfortunately, short of creating a brand-new LLM architecture, it’s also impossible to prevent model hallucinations before they’re generated.
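To make the idea of a confidence signal concrete, here is a minimal sketch that assumes your API can return per-token log-probabilities (not all of them do). It turns those values into a single rough score and flags low-scoring answers for review. The function names and the 0.5 threshold are illustrative assumptions, and a high score is no guarantee of truth: a model can be confidently wrong.

```python
import math


def mean_token_probability(token_logprobs):
    """Geometric-mean probability of the generated tokens (a rough proxy only)."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def needs_review(token_logprobs, threshold=0.5):
    """Flag an answer whose average token probability falls below the threshold.

    The threshold is an assumed starting point, not a recommended value.
    """
    return mean_token_probability(token_logprobs) < threshold


# Hypothetical log-probabilities for two answers.
confident = [-0.05, -0.10, -0.02]
shaky = [-1.2, -2.3, -0.9]

print(needs_review(confident))
print(needs_review(shaky))
```

Treat a flag like this as a prompt for a human or a second check to look closer, not as a hallucination detector in its own right.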

One way to limit model hallucinations is to provide LLMs with additional context via prompt engineering techniques. For example, a bank using an LLM to facilitate interactions with customers might want to give the LLM access to its internal accounts API so that it can retrieve customer account data.

However, this approach can sometimes backfire as well. The LLM could, potentially, access another customer’s data, perhaps a second customer with the same name, and return it to the wrong user! This introduces a number of privacy concerns.
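One way to reduce that risk is to key every lookup on the ID from the authenticated session rather than on anything the customer types into the chat. The sketch below is a simplified illustration: `ACCOUNTS`, `fetch_account`, and `build_prompt` are hypothetical stand-ins for a real accounts API and prompt template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    customer_id: str
    name: str
    balance: float


# Hypothetical in-memory stand-in for the bank's internal accounts API.
# Note the two customers who share a name.
ACCOUNTS = {
    "c-001": Account("c-001", "Alex Smith", 1250.00),
    "c-002": Account("c-002", "Alex Smith", 87.50),
}


def fetch_account(authenticated_customer_id: str) -> Account:
    """Look up by the session's verified ID, never by a name taken from the chat."""
    return ACCOUNTS[authenticated_customer_id]


def build_prompt(authenticated_customer_id: str, question: str) -> str:
    """Ground the model in one customer's data and ask it to admit gaps."""
    account = fetch_account(authenticated_customer_id)
    return (
        "Answer using only the account data below. "
        "If the answer is not in the data, say you don't know.\n"
        f"Account holder: {account.name}\n"
        f"Balance: {account.balance:.2f}\n"
        f"Question: {question}"
    )


print(build_prompt("c-001", "What is my balance?"))
```

Because the model only ever sees the one account tied to the session, a shared name cannot pull in someone else's balance.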

Another way to combat model hallucinations is to turn down the “temperature” parameter associated with LLM generation. Many LLM APIs, such as the one for GPT-3, expose this setting. Lowering it makes the model favor its most probable next words, so it generates more predictable responses in line with its training data, though at the expense of creativity. This might be desirable when using LLMs within a well-regulated business context.
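To see why temperature has this effect, here is a small sketch of temperature-scaled softmax, the standard way of turning a model's raw scores (logits) into next-token probabilities. The three logits are made-up values for illustration.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.5]

low = softmax_with_temperature(logits, 0.2)   # top token dominates
high = softmax_with_temperature(logits, 1.5)  # probability spreads out

print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

At the low temperature nearly all of the probability lands on the top-scoring token, while at the higher one the alternatives stay in play. Some APIs also accept a temperature of 0 and treat it as always picking the top token; this sketch requires a positive value.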

Another sensible approach is to monitor LLM usage at a granular level. You won’t be able to prevent hallucinations, and you may not even detect them the moment they arise, but you’ll likely be attuned to their downstream effects and able to trace them back to the source.

Returning to our bank example, if an LLM reports an incorrect account balance to a customer, you could detect subsequent attempts by that customer to overdraw their account and quickly work to remedy the issue while its effect is still negligible.
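A rough sketch of what that tracing could look like: log every balance the LLM states alongside the ledger value at that moment, then look back through the log when an overdraft attempt comes in. `BalanceClaim` and `ClaimLog` are hypothetical names, and the sketch assumes an upstream step has already extracted the stated balance from the model's response.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class BalanceClaim:
    response_id: str
    customer_id: str
    stated_balance: float  # what the LLM told the customer
    ledger_balance: float  # what the system of record said at the time

    @property
    def mismatch(self) -> bool:
        # Tolerate sub-cent differences from rounding.
        return abs(self.stated_balance - self.ledger_balance) > 0.005


class ClaimLog:
    def __init__(self) -> None:
        self._claims: List[BalanceClaim] = []

    def record(self, claim: BalanceClaim) -> None:
        self._claims.append(claim)

    def trace_overdraft(self, customer_id: str) -> Optional[BalanceClaim]:
        """Return the most recent mismatched claim shown to this customer, if any."""
        for claim in reversed(self._claims):
            if claim.customer_id == customer_id and claim.mismatch:
                return claim
        return None


log = ClaimLog()
log.record(BalanceClaim("r-1", "c-001", 1250.00, 1250.00))
log.record(BalanceClaim("r-2", "c-001", 2150.00, 1250.00))  # hallucinated figure
log.record(BalanceClaim("r-3", "c-002", 87.50, 87.50))

suspect = log.trace_overdraft("c-001")
print(suspect.response_id if suspect else "no mismatched claim found")
```

In practice the same comparison could raise an alert as soon as a mismatch is recorded, rather than waiting for the overdraft.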

Finally, you’ll want to monitor for distribution shift and model drift, periodically fine-tuning or retraining the model as necessary. This will make the model less likely to generate responses that are no longer aligned with its training data.
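One common way to put a number on distribution shift is the Population Stability Index (PSI), which compares how a monitored value is spread across bins in a baseline window versus a recent one. The sketch below applies it to prompt length in words. The choice of feature, the bin edges, and the sample data are all assumptions for illustration, and the often-quoted 0.25 alert level is a rule of thumb rather than a hard limit.

```python
import math


def psi(baseline, current, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bin edges."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # Bin index = number of edges the value has reached or passed.
            counts[sum(v >= e for e in edges)] += 1
        # Floor each share at eps so empty bins do not cause log(0).
        return [max(c / len(values), eps) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Hypothetical prompt lengths (in words) from two time windows.
baseline_lengths = [10, 12, 15, 20, 22, 25, 30, 35]
recent_lengths = [28, 30, 35, 40, 42, 45, 50, 60]
bin_edges = [15, 25]

score = psi(baseline_lengths, recent_lengths, bin_edges)
print(f"PSI = {score:.2f}")
```

A score near zero means the two windows look alike; a large one suggests the inputs have moved away from what the model was tuned on and that retraining may be worth a look.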

Should Businesses Use LLMs?

That depends on your risk tolerance—and your industry.

In creative, low-risk fields like marketing, customer support, publishing, and design, LLMs are already proving to be powerful collaborators. They can generate content, spark new ideas, and handle repetitive tasks, with hallucinations often being harmless—or even helpful in driving creativity.

But for highly regulated industries like healthcare, finance, or law, the stakes are much higher. A single hallucinated data point could mean a misdiagnosis, a compliance violation, or a breach of customer trust. In these cases, LLMs require careful oversight, robust safeguards, and continuous monitoring to be viable.

Then there’s the gray zone—sectors like banking, accounting, and IT, where LLMs offer enormous potential to streamline workflows and cut costs, but also carry meaningful risk. Here, the difference between success and disaster often comes down to how well the system is monitored and maintained.

If you’re deploying LLMs in production, especially in sensitive workflows, you need more than just great prompts—you need visibility. Mona’s free GPT monitoring solution gives teams the tools to track model behavior, detect hallucinations early, and understand the downstream impact of AI-generated responses. With the right monitoring in place, LLMs become not just usable—but reliable.