Introducing AI fairness powered by Mona

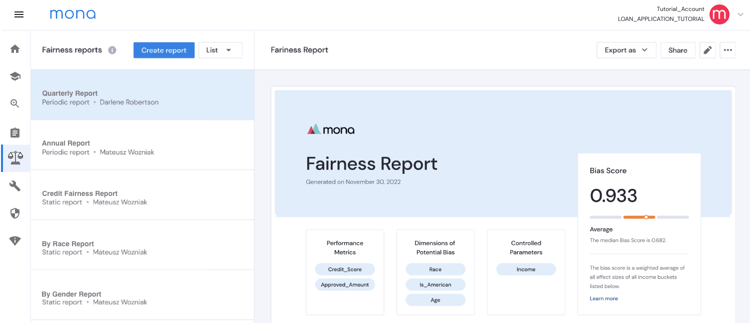

We recently announced the latest addition to our intelligent monitoring platform: Mona’s new AI fairness feature. It helps identify and eliminate bias in AI-driven applications, increasing trust in your production machine learning models and supporting compliance readiness.

From Monitoring to Fairness in AI-Driven Applications

Mona's intelligent monitoring solution provides complete visibility into AI systems, automatically detecting potential issues before they negatively impact your business. These insights give you a deep understanding of how your machine learning models behave across various protected segments of data, in the context of the business function they serve. Until now, these capabilities were used mainly for monitoring: to ensure that your AI operates as expected, to address issues before business KPIs are hurt, and to vastly improve research capabilities with real-world insights.

Our new AI fairness feature leverages the same analytical engine to detect potential biases and generate fully configurable fairness reports that can be used for both internal and external audits.

Fairness in AI

Fairness in AI refers to the concept of creating algorithms and systems that make impartial and unbiased decisions. This is an important and heavily discussed topic nowadays, since AI systems often inherit and amplify biases present in the data they are trained on, resulting in discriminatory outcomes.

One of the major sources of bias in AI systems is biased training data. This can stem from historic discrimination, under-representation of certain groups, or even intentional manipulation of data. For instance, a facial recognition system trained predominantly on light-skinned individuals is likely to have a higher error rate when recognizing individuals with darker skin tones. Similarly, a language model trained on online text may develop biases based on gender, race, or religion if the data is skewed towards certain perspectives.

Addressing and preventing biases in AI systems is crucial, as they can result in significant real-world consequences. Biased algorithms can perpetuate historical inequalities and cause discriminatory outcomes in many areas, leading to distrust in the system.

Main Challenges in Assessing AI Biases

1. You need to look at the actual system's behavior

Until recently, most approaches to evaluating bias revolved around auditing the data used to train the model, such as ensuring diversity and adequate representation of protected groups. While this is important, the approach has its limitations.

The only real way to make sure an AI-based system is fair is to monitor its actual behavior in the real world and verify that the decisions it makes are fair. This is because there are always discrepancies between the data a model is trained on and what it encounters in production.

This mirrors how we evaluate human decision-makers: rather than relying solely on their training, we assess the decisions they actually make. Monitoring behavior in real-world situations is the only effective way to verify that an AI system is fair.
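As a rough illustration of measuring fairness on live behavior rather than on training data, the sketch below computes one common metric, demographic parity (the gap in positive-decision rates between protected groups), directly from logged production decisions. The record fields (`group`, `approved`), the sample data, and the function names are hypothetical; this is not Mona's API, just a minimal sketch of the idea.

```python
from collections import defaultdict

# Hypothetical production log: one record per decision the deployed model made.
# "group" is a protected attribute; "approved" is the model's actual decision.
DECISIONS = [
    {"group": "X", "approved": True},
    {"group": "X", "approved": True},
    {"group": "X", "approved": True},
    {"group": "X", "approved": False},
    {"group": "Y", "approved": True},
    {"group": "Y", "approved": False},
    {"group": "Y", "approved": False},
    {"group": "Y", "approved": False},
]


def selection_rates(records, group_key="group", outcome_key="approved"):
    """Share of positive decisions per protected group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for rec in records:
        totals[rec[group_key]] += 1
        positives[rec[group_key]] += int(rec[outcome_key])
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(records, **keys):
    """Largest difference in selection rate between any two groups (0 = parity)."""
    rates = selection_rates(records, **keys)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    print(selection_rates(DECISIONS))         # {'X': 0.75, 'Y': 0.25}
    print(demographic_parity_gap(DECISIONS))  # 0.5
```

Because the inputs are the decisions the model actually made, the same computation can be rerun on each new batch of production data, catching drift that a one-time training-data audit would miss.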

2. You need to avoid “Simpson’s Paradox”

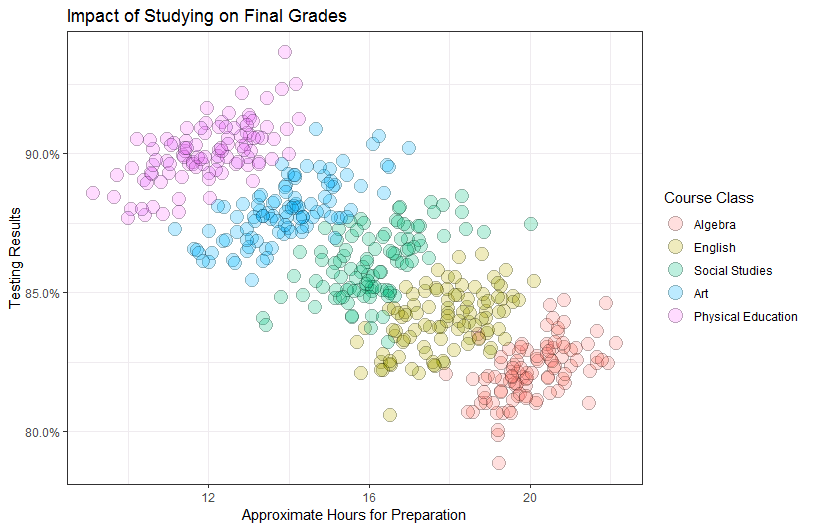

Simpson's Paradox is a statistical phenomenon in which the relationship between two variables in aggregated data differs from, or even reverses, the relationship observed within each subgroup. In other words, a trend that appears in every subgroup can disappear or reverse when the groups are combined.

For example, there might be a negative correlation between the number of hours studied and the exam scores for a large group of students. However, when you break down the data and analyze it for individual classes, the relationship between the number of hours studied and exam scores becomes positive for each class.

Looking at this data naively might lead you to conclude that studying less results in a better grade. However, once you account for the fact that different classes' exams demand different amounts of study time, the opposite conclusion emerges: within any given class, studying more is associated with a better grade.
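The student example can be reproduced with a few lines of Python. The data below is invented for illustration: classes with harder exams prompt more study but produce lower raw scores, so the pooled correlation between hours and score is negative even though it is positive inside every class.

```python
import math

# Hypothetical toy data: (class, hours studied, exam score).
# Harder exams (class C) prompt more study but yield lower raw scores.
STUDENTS = [
    ("A", 1, 80), ("A", 2, 84), ("A", 3, 88), ("A", 4, 92),
    ("B", 5, 60), ("B", 6, 64), ("B", 7, 68), ("B", 8, 72),
    ("C", 9, 40), ("C", 10, 44), ("C", 11, 48), ("C", 12, 52),
]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


def overall_correlation(rows):
    """Correlation of hours vs. score with all classes pooled together."""
    return pearson([h for _, h, _ in rows], [s for _, _, s in rows])


def per_group_correlation(rows):
    """Correlation of hours vs. score computed separately for each class."""
    groups = {}
    for cls, hours, score in rows:
        hs, ss = groups.setdefault(cls, ([], []))
        hs.append(hours)
        ss.append(score)
    return {cls: pearson(hs, ss) for cls, (hs, ss) in groups.items()}


if __name__ == "__main__":
    print(f"overall: {overall_correlation(STUDENTS):+.2f}")
    for cls, r in sorted(per_group_correlation(STUDENTS).items()):
        print(f"class {cls}: {r:+.2f}")
```

Running it prints an overall coefficient of -0.83 and +1.00 for each class: the sign flips purely because the data was pooled.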

Similar challenges arise when evaluating the behavior of AI models. To avoid Simpson’s Paradox, you need a comprehensive system that controls for relevant parameters, allowing for accurate assessments of model bias on protected features and groups.
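To make "controlling for relevant parameters" concrete, here is a minimal sketch that compares approval rates between protected groups both in aggregate and within each stratum of a control variable. The field names (`group`, `band`, `approved`), the income-band framing, and the data are all hypothetical, and this is not how Mona computes its bias score (the post does not describe that); it only shows how an aggregate gap can vanish once a relevant parameter is held fixed.

```python
from collections import defaultdict


def make_records(group, band, n, n_approved):
    """Build n hypothetical decision records, the first n_approved of them approved."""
    return [{"group": group, "band": band, "approved": i < n_approved} for i in range(n)]


# Hypothetical log: group X is over-represented in the "high" income band,
# but within each band both groups are approved at the same rate.
RECORDS = (
    make_records("X", "high", 8, 6) + make_records("Y", "high", 4, 3)
    + make_records("X", "low", 4, 1) + make_records("Y", "low", 8, 2)
)


def approval_gap(records, protected="group"):
    """Max minus min approval rate across the protected groups present in records."""
    by_group = defaultdict(list)
    for rec in records:
        by_group[rec[protected]].append(rec["approved"])
    rates = [sum(v) / len(v) for v in by_group.values()]
    return max(rates) - min(rates)


def stratified_gaps(records, control="band", protected="group"):
    """Approval gap computed separately inside each stratum of the control variable."""
    strata = defaultdict(list)
    for rec in records:
        strata[rec[control]].append(rec)
    return {s: approval_gap(rs, protected) for s, rs in strata.items()}


if __name__ == "__main__":
    print(f"aggregate gap: {approval_gap(RECORDS):.3f}")  # 0.167
    for band, gap in stratified_gaps(RECORDS).items():
        print(f"{band} band gap: {gap:.3f}")              # 0.000 for both bands
```

The aggregate comparison suggests group X is favored, yet within each band the gap is zero; the raw difference comes entirely from how the groups are distributed across bands. Whether a given variable is a legitimate control or a proxy for the protected attribute is a judgment call this sketch does not make.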

Mona’s AI Fairness Solution

Luckily, Mona is designed precisely to address these challenges.

First, it connects to every part of the business process that the AI model serves in production, rather than focusing solely on the model or the training data. It can therefore correlate training data, model behavior, and actual real-world outcomes to provide relevant metrics for detecting bias and assessing fairness.

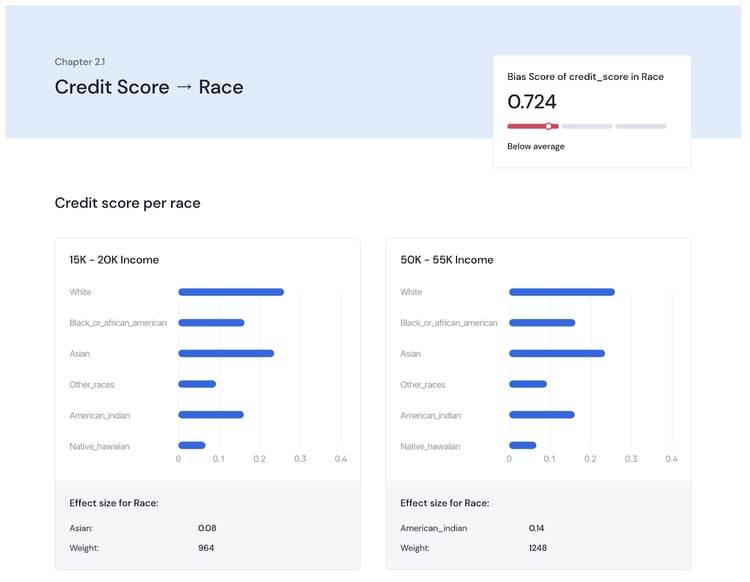

Second, it has a one-of-a-kind analytical engine that allows flexible segmentation of the data to control for relevant parameters. This enables accurate correlation assessments in the right context, avoiding Simpson’s Paradox and providing a meaningful “bias score” for any performance metric on any protected feature.

With Mona, you can be confident that your AI is performing well and acting fairly, ensuring compliance readiness and giving you peace of mind that bias in your AI will not go unnoticed. Contact us today to learn how your business can benefit from our new AI fairness feature.